Hello!

I'm new to MTurk as a requester and looking to get some MTurk workers to pick up my HITs. I'm still improving my image annotation tool and working out how best to balance reward and worker qualifications.

This is my HIT; please search for "Segment and label the objects in this image (Tool fixed)".

Title: Segment and label the objects in this image (Tool fixed)

Description: Please look at this image, outline any objects that belong to one of our target object classes, and select the object's class.

Reward: $0.08

Assignment Duration: 1 hour

Auto Approval Delay: 2 days

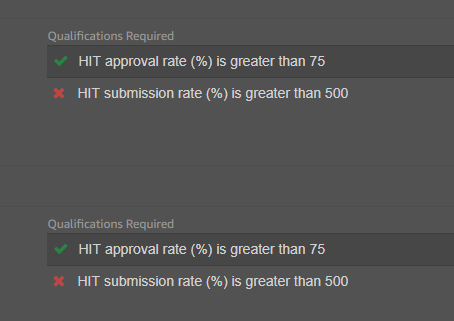

Qualification 1: user must have > 75% approval rate

Qualification 2: user must have submitted > 500 assignments
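
For anyone who wants to check the setup, here is a rough sketch of how the settings above might map onto the arguments of boto3's `create_hit`. It only builds the parameter dictionary, so it runs without AWS credentials. The function name `build_hit_params`, the lifetime, and the number of assignments are assumptions for illustration and are not part of the HIT as posted. Note also that the built-in `NumberHITsApproved` qualification counts approved HITs, which is only an approximation of "submitted > 500 assignments".

```python
# System qualification type IDs published by MTurk.
PERCENT_APPROVED = "000000000000000000L0"      # Worker_PercentAssignmentsApproved
NUMBER_HITS_APPROVED = "00000000000000000040"  # Worker_NumberHITsApproved


def build_hit_params(question_xml, lifetime_seconds=7 * 24 * 3600, max_assignments=1):
    """Build keyword arguments for the MTurk create_hit call.

    lifetime_seconds and max_assignments are assumed values; the post
    does not state them.
    """
    return {
        "Title": "Segment and label the objects in this image (Tool fixed)",
        "Description": (
            "Please look at this image, outline any objects that belong to one "
            "of our target object classes, and select the object's class."
        ),
        "Reward": "0.08",                             # USD, passed as a string
        "AssignmentDurationInSeconds": 60 * 60,       # 1 hour
        "AutoApprovalDelayInSeconds": 2 * 24 * 3600,  # 2 days
        "LifetimeInSeconds": lifetime_seconds,        # assumption
        "MaxAssignments": max_assignments,            # assumption
        "Question": question_xml,
        "QualificationRequirements": [
            {   # Qualification 1: approval rate > 75%
                "QualificationTypeId": PERCENT_APPROVED,
                "Comparator": "GreaterThan",
                "IntegerValues": [75],
            },
            {   # Qualification 2: more than 500 approved HITs (approximation)
                "QualificationTypeId": NUMBER_HITS_APPROVED,
                "Comparator": "GreaterThan",
                "IntegerValues": [500],
            },
        ],
    }
```

With credentials configured, the dictionary could be passed along as `boto3.client("mturk", region_name="us-east-1").create_hit(**build_hit_params(question_xml))`, where `question_xml` is the ExternalQuestion or HTMLQuestion XML pointing at the annotation tool.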

I made a mistake when first posting, so please only attempt the job with (Tool fixed) in the title. I can't work out how to delete the previous one because I overwrote one of the files containing the HIT IDs... noob mistake.
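
One possible way to recover the lost HIT IDs, sketched under assumptions: boto3's MTurk client has a paginated `list_hits` call, so the old HITs could be found again by title and then expired with `update_expiration_for_hit`. The helper names `find_hit_ids_by_title` and `expire_hits` are hypothetical, and the exact title of the earlier HIT would need to be filled in.

```python
from datetime import datetime, timezone


def find_hit_ids_by_title(client, title):
    """Page through list_hits and collect IDs of HITs whose title matches exactly."""
    hit_ids = []
    kwargs = {"MaxResults": 100}
    while True:
        page = client.list_hits(**kwargs)
        hit_ids += [h["HITId"] for h in page["HITs"] if h["Title"] == title]
        token = page.get("NextToken")
        if not token:
            return hit_ids
        kwargs["NextToken"] = token


def expire_hits(client, hit_ids):
    """Set the expiration of each HIT to a past date so workers can no longer accept it."""
    past = datetime(2015, 1, 1, tzinfo=timezone.utc)
    for hit_id in hit_ids:
        client.update_expiration_for_hit(HITId=hit_id, ExpireAt=past)
```

Here `client` would be `boto3.client("mturk", region_name="us-east-1")`. Expiring a HIT does not delete it; `delete_hit` only succeeds once the HIT is reviewable and all of its submitted assignments have been approved or rejected.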

Any feedback is much appreciated - thanks!
